AI is already in your employees’ browsers, whether you’ve trained them or not. Your teams are experimenting, trying out prompts, and adapting as they go. That’s exciting, but it can also be messy. Instead of blocking everything or letting chaos reign, you can turn that curiosity into consistent, safe, high-quality work.

This article discusses how employers can train their workforce to use AI tools safely, ethically, and productively, without slowing the team down.

Why Train Your Employees for AI?

Employees are already experimenting with AI in everyday tasks such as drafting emails, summarizing meetings, and organizing data. Formal training converts this informal use into consistent, safe, and high-quality practice. It also ensures that your organization captures the productivity gains that competitors are beginning to systematize.

Unstructured use carries clear risks. Sensitive information can be copied into unapproved tools, creating exposure under privacy and confidentiality obligations. AI systems can produce confident but inaccurate statements that find their way into customer communications or compliance documents. Without shared standards, results vary widely across teams. And unapproved tools make security oversight difficult.

A structured training program addresses these issues.

- It sets clear expectations about where AI may be used, what data may be shared, and when human review is required.

- It establishes repeatable workflows, supported by approved prompts, templates, and quality checks. This lets individual successes become organization-wide improvements.

- It also enables measurement. Leaders can track time saved, quality scores, adoption rates, and the ROI relative to tool and training costs.

- It promotes a responsible culture where employees can innovate without creating unnecessary risk.

For example, a recruiting team might learn an approved process to draft a job description with AI, apply a standardized bias check, and record any edits. The task is completed in minutes rather than hours, the output meets policy requirements, and the activity is auditable.

AI Training Program: Setting Goals Upfront

An AI training program should begin with clear business goals and a simple policy that explains how employees may use these tools. This alignment prevents ad-hoc experiments from creating risk. It also allows leaders to measure progress.

- Define outcomes: Identify three to five objectives and express them as measurable targets. Set a baseline before training so improvements can be tracked.

- Create an AI Use Policy: Publish a short document that answers the practical questions employees will have:

  - Scope and approved tools: Which systems are allowed, and for what types of tasks.

  - Data handling: What information may never be shared (e.g., personal data, confidential contracts, health records), and what must be redacted.

  - Human oversight: When a human must review or sign off, especially for legal, financial, or customer-facing content.

  - Attribution and labeling: When to note that AI assisted the work.

  - Record-keeping: How drafts, prompts, and final outputs are stored for audit purposes.

  - Incident response: How to report suspected data exposure or inaccurate outputs, and who will respond.

- Assign ownership: Name an executive sponsor, a program lead in learning and development, a technical lead from IT or security, and a legal or compliance contact. Appoint team “champions” who can answer routine questions and collect feedback.

- Introduce risk tiers: Classify common tasks as low, medium, or high risk and match each tier with the required controls. For example, low-risk internal drafts may proceed with basic checks, while high-risk customer communications need documented review.

- Standardize tool approval: Use a brief intake checklist covering data residency, vendor security practices, encryption, authentication, access controls, and contractual protections. Only approved tools should be available to staff accounts.
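The risk-tier idea above can be written down as a simple lookup, which makes the policy easier to publish and audit. The sketch below is illustrative only: the task names, the medium-tier controls, and the choice to treat unlisted tasks as high risk are assumptions, not rules from this article. Only the low-risk and high-risk examples come from the text above.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical control sets; replace with the controls your policy defines.
REQUIRED_CONTROLS = {
    RiskTier.LOW: ["basic checks"],
    RiskTier.MEDIUM: ["basic checks", "peer review"],  # assumed example
    RiskTier.HIGH: ["basic checks", "documented review"],
}

# Hypothetical task catalogue; each team would maintain its own.
TASK_TIERS = {
    "internal draft": RiskTier.LOW,
    "job description": RiskTier.MEDIUM,
    "customer communication": RiskTier.HIGH,
}


def controls_for(task: str) -> list[str]:
    """Return the controls required for a task.

    Tasks missing from the catalogue are treated as HIGH risk (an assumed
    default) so that new use cases get reviewed before they are relaxed.
    """
    tier = TASK_TIERS.get(task, RiskTier.HIGH)
    return REQUIRED_CONTROLS[tier]


if __name__ == "__main__":
    for task in ("internal draft", "customer communication", "contract summary"):
        print(task, "->", controls_for(task))
```

Defaulting unknown tasks to the strictest tier is one possible design choice. It trades a little friction for a guarantee that nothing skips review simply because nobody classified it.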

A one-page summary of these rules can be shared alongside training materials. This gives employees the confidence to use AI effectively while protecting the organization.

Skill Map: Who Needs What

Tier 1: AI Literacy (all employees)

Goal: Safe, competent daily use.

Core skills: approved tools and data rules, basic prompting (clear task, context, constraints), fact-checking, proper record-keeping.

Tier 2: Power Users / Team Champions (selected staff)

Goal: Standardize and lift team productivity.

Core skills: turn recurring tasks into simple workflows and prompts; run small A/B checks to track time/quality; coach colleagues and collect feedback.

Tier 3: Builders / Integrators (IT/Ops/advanced analysts)

Goal: Connect AI to systems under governance.

Core skills: tool evaluation and access control, light integrations/automations with logging, accuracy checks, and defined human sign-off.

Assessment

Brief task-based checks at each tier; simple internal certification tied to permissions; annual refreshers for policy and workflow updates.

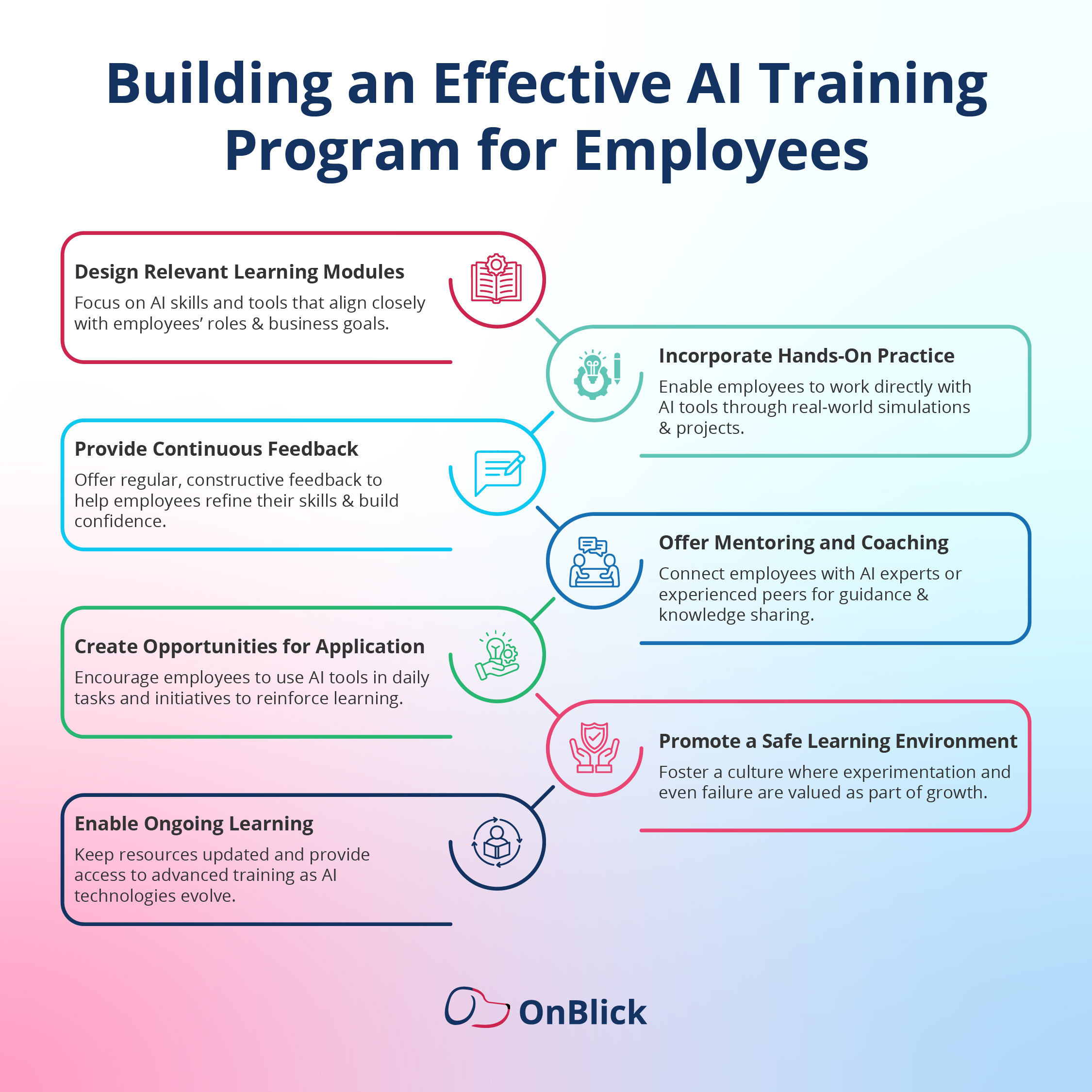

AI Training Program Design

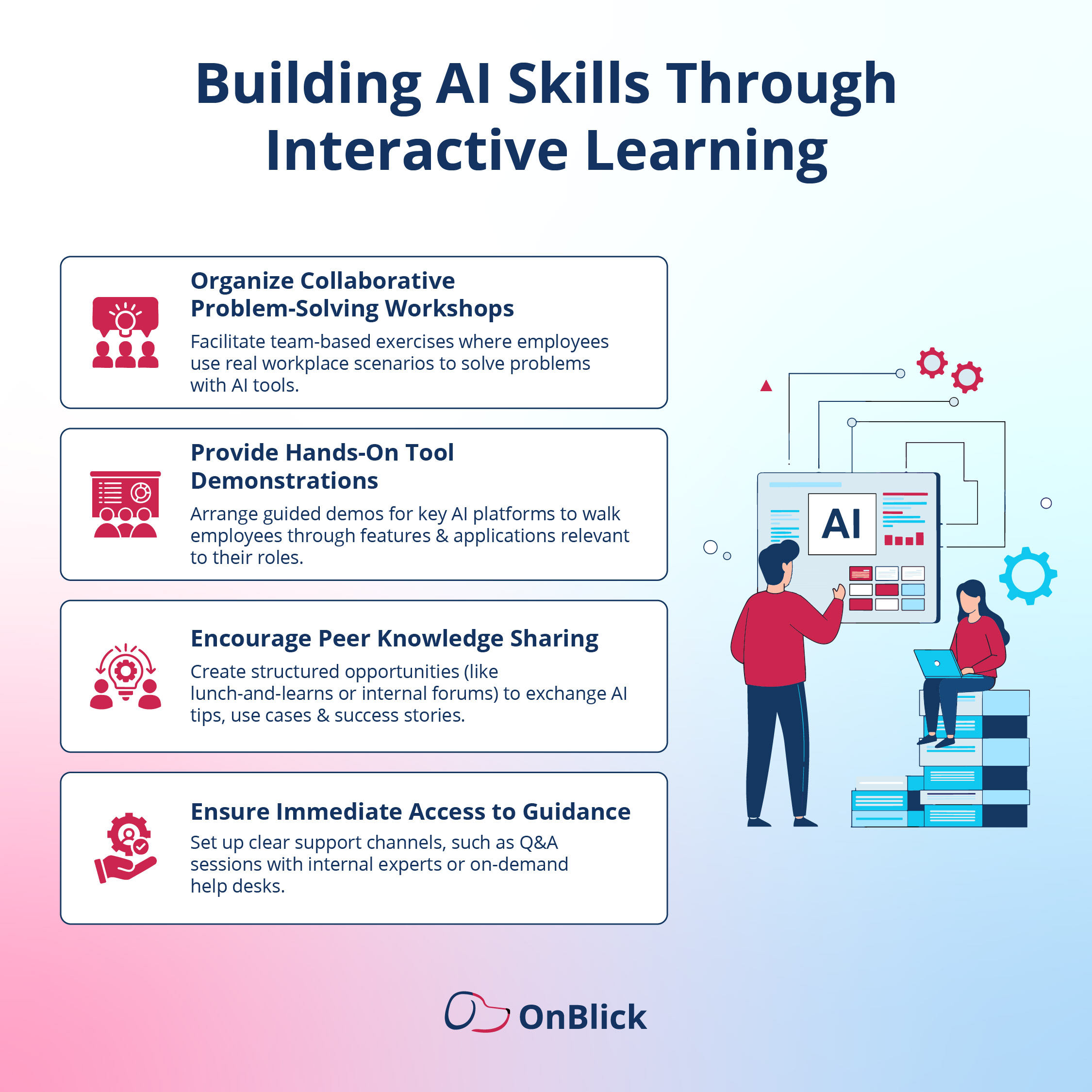

A clear structure helps employees build confidence while the organization maintains control. Combine short lessons, guided practice, and on-the-job application.

Learning formats

- Micro-learning modules (10–15 minutes) that cover policy, approved tools, and basic prompting.

- Live labs using real, low-risk tasks with a facilitator and a checklist for quality and safety.

- Office hours for questions and review of sample outputs.

- Job aids: prompt cards, redaction tips, and SOP checklists stored in a shared library.

30/60/90-day rollout

- Days 1–30: Announce goals and policy; grant access to approved tools; deliver Tier 1 ‘AI Literacy’ to all staff; select 3–5 use cases per function; record baselines for time and quality.

- Days 31–60: Run Tier 2 labs for team champions; publish a small prompt/workflow library; draft SOPs with review steps; hold weekly office hours; begin measuring time saved and error rates.

- Days 61–90: Extend to additional teams; start limited Tier 3 pilots for simple automations with logging and human sign-off; formalize a monthly governance review; publish results and lessons learned.

Assessment and support

- Use brief, task-based checks to confirm competence at each tier.

- Tie internal certification to permissions (e.g., access to prompt libraries or integrations).

- Maintain a single help channel (IT/Security + L&D) for tool questions, incidents, and feedback.

Inclusion and logistics

- Offer recordings and captions, schedule sessions across time zones, and ensure materials are accessible.

- Protect capacity by setting clear time expectations (for example, one hour per week during the first month).

This design builds foundational skills quickly, proves value on real work, and prepares the organization for responsible expansion.

Responsible and Secure AI Use

Responsible use protects people, data, and the organization. Publish clear rules and make them easy to follow.

1) Data protection

- Never input personal identifiers (PII), health/financial data, confidential contracts, source code, or customer secrets.

- Minimize and redact: Share only what is required; remove names, IDs, and sensitive clauses.

- Approved environments only: Use organization-approved tools with SSO, logging, and data retention controls.

- Storage: Save drafts and final outputs in designated folders with access controls and version history.
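The "minimize and redact" rule can be partly supported by a small pre-processing step that masks obvious identifiers before text is pasted into an approved tool. The following is a minimal sketch, not a complete PII filter: the patterns are deliberately simple, the `EMP-` employee ID format is hypothetical, and pattern matching cannot reliably catch names or sensitive clauses, so a human check is still needed.

```python
import re

# Illustrative patterns only; they will miss many real identifiers.
# Order matters: the ID pattern runs first so long IDs are not mistaken for phones.
PATTERNS = {
    "EMPLOYEE_ID": re.compile(r"\bEMP-\d{4,}\b"),  # hypothetical ID format
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace each match with a bracketed placeholder such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    sample = "Contact jane.doe@example.com or EMP-12345 about the renewal."
    print(redact(sample))
```

A helper like this works best as a safety net alongside the redaction tips in the job aids, not as a replacement for them.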

2) Human oversight

- Define mandatory review for legal, financial, external, or high-risk content.

- Require authors to verify facts, check references, and confirm tone and policy compliance before publishing.

- Label outputs as “AI-assisted” when policy requires.

3) Fairness and quality

- Use bias checks for hiring, performance, and customer communications.

- Prefer transparent prompts and documented criteria over ad-hoc judgment.

- Track accuracy and error rates on recurring tasks; refine prompts or remove use cases that underperform.

4) Compliance and vendor due diligence

- Approve tools through a short checklist: Data residency, encryption, access control, audit logs, vendor security certifications, and a signed data-processing agreement.

- Keep an up-to-date list of approved tools and versions; remove deprecated ones.

5) Incident response

- If sensitive data is exposed or an output causes harm: Stop use, preserve logs, notify the designated contact (IT/Security), and follow the documented remediation steps.

- Conduct a brief post-incident review and update prompts, SOPs, or access as needed.

6) Practical safeguards

- Default to summaries instead of full documents.

- Use redaction templates and watermarks for drafts.

- Limit access to prompt libraries by role; review permissions quarterly.

These foundations allow teams to use AI confidently while meeting security, privacy, and regulatory expectations.

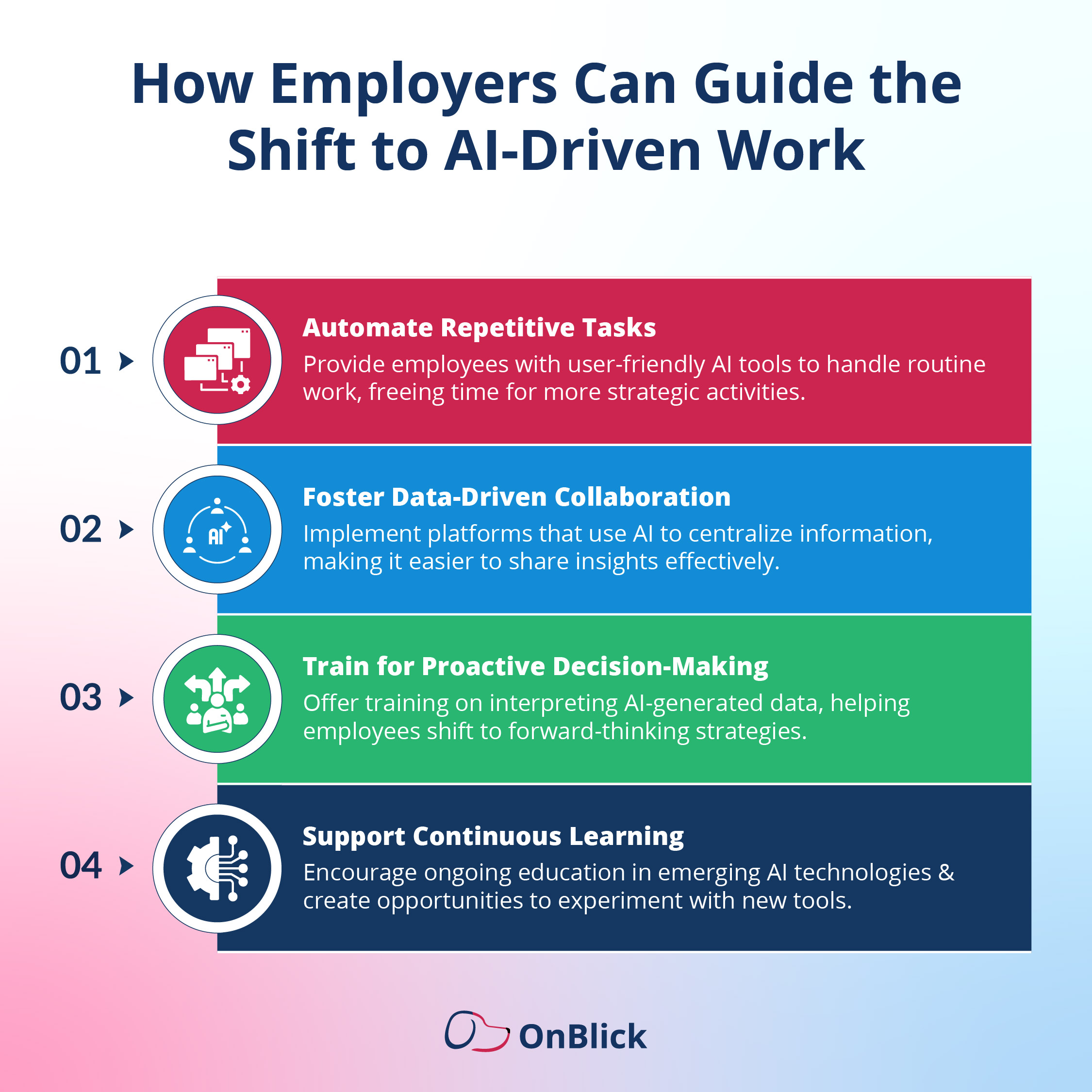

Embed AI into Everyday Workflows

- Create one-page SOPs per use case: purpose, allowed inputs/redaction, 4–6 steps with the approved prompt, reviewer and checks, acceptance criteria, storage/location, owner and version date.

- Update existing templates (emails, reports, tickets) to include an ‘AI-assist’ step and a short verification checklist (facts, tone, policy).

- Maintain a small prompt library with example inputs/outputs; tag by function and risk; edit rights for team champions; monthly review.

- Link where work happens (docs, knowledge base, CRM/help desk macros) so staff do not need to search.

- Light governance: monthly SOP/prompt refresh, single help channel for questions/incidents.

- Track simple metrics per use case: time saved, error rate, adoption; share one ‘AI win’ each month to reinforce good practice.

Measure What Matters

- Set a baseline: time per task, error/redo rate, quality score (1–5), current adoption.

- Track three areas:

  - Efficiency & quality (minutes saved, error rate, quality).

  - Adoption & compliance (active users, uses per approved workflow, SOP adherence).

  - Business outcomes (cycle time, CSAT, time-to-fill, report turnaround).

- Collect data simply: Tool logs where available, two self-report fields in templates (“minutes spent”, “review needed?”), monthly sampling by team champions.

- Run small A/Bs: AI-assisted vs. control on the same task and inputs; report minutes saved and quality difference.

- State ROI clearly: net benefit = (hours saved × loaded hourly rate) − (tool cost + cost of training time). Review monthly; scale what works, refine or retire what doesn’t.
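The A/B comparison and the ROI calculation above are simple enough to keep in a spreadsheet, but a short script shows the arithmetic explicitly. This is a sketch under stated assumptions: the function names and all figures are invented for illustration, and training time is valued at the same loaded hourly rate as the time saved, which is one reasonable reading of the formula rather than the only one.

```python
from statistics import mean


def minutes_saved_per_task(control_minutes, assisted_minutes):
    """Average minutes saved when the same task is done with AI assistance."""
    return mean(control_minutes) - mean(assisted_minutes)


def net_benefit(hours_saved, loaded_hourly_rate, tool_cost, training_hours):
    """(hours saved x loaded hourly rate) - (tool cost + cost of training time).

    Assumption: training time is costed at the same loaded hourly rate.
    """
    value_of_time_saved = hours_saved * loaded_hourly_rate
    training_cost = training_hours * loaded_hourly_rate
    return value_of_time_saved - (tool_cost + training_cost)


if __name__ == "__main__":
    # Invented sample data: three timed runs of the same task per group.
    print(minutes_saved_per_task([30, 34, 32], [20, 22, 21]))  # 11

    # Invented monthly figures: 120 hours saved at a 60.0 loaded rate,
    # 1500.0 in tool costs, and 40 hours of training time.
    print(net_benefit(120, 60.0, 1500.0, 40))  # 3300.0
```

In the sample figures, the time saved is worth 7,200, costs total 3,900 (1,500 for tools plus 2,400 of training time), and the net benefit is 3,300 for the month. Reporting the inputs next to the result keeps the number honest and easy to challenge.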

Summing Up

AI is most useful when it’s guided, not guessed. This article discussed a practical way to bring that guidance to your organization: set clear goals and guardrails, choose a handful of high-impact use cases, match skills to roles, roll out training in a 30/60/90 plan, teach simple prompting habits, embed AI in SOPs and templates, and track a small set of metrics to prove value. The result is steady productivity gains, fewer errors, and lower risk.

What to do next

- Name an executive sponsor and a program lead.

- Publish a one-page AI use policy and an approved tools list.

- Pick 3–5 low-risk, high-volume tasks and capture baseline time/quality.

- Run AI Literacy training for all; equip team champions with a small prompt/workflow library.

- Review monthly: scale what works, fix what doesn’t, and retire what adds risk.

Start focused, measure honestly, and expand with confidence. That approach builds an AI-ready workforce without disrupting the work that matters.

Looking to put these ideas into practice with the right compliance and workforce tools?

OnBlick helps employers streamline HR, immigration, and compliance processes, making your team not only AI-ready but audit-ready too.

Book your free demo today and learn more about our product and services.
